Designing a scalable system for displaying metrics

Creating a reusable framework for displaying performance data across dashboards

- Scope: Define and design a reusable framework and components for presenting data across a range of dashboards and use cases

- Role: Lead Product Designer

- Team: 1x backend developer, 1x frontend developer

Context

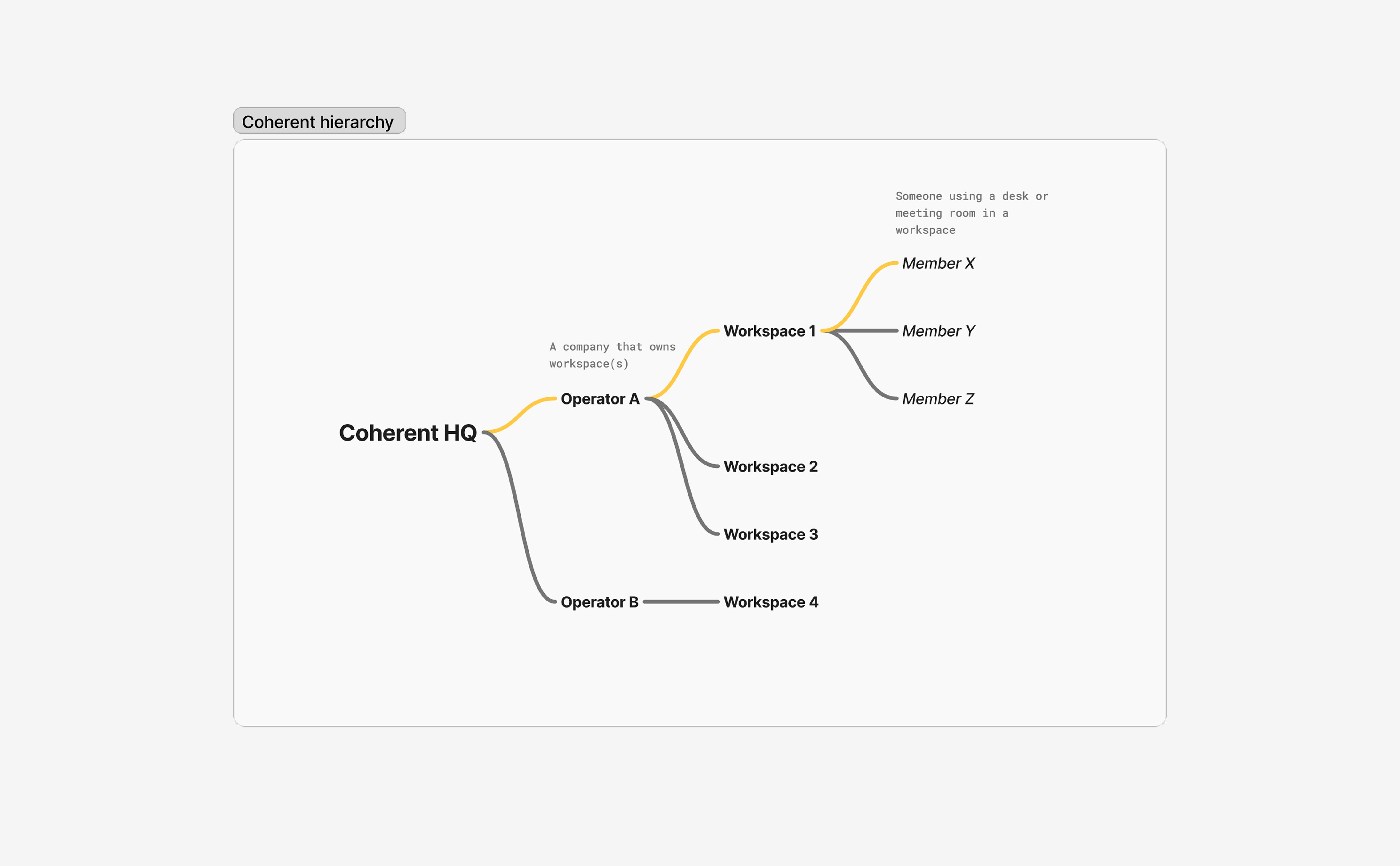

Coherent had an HQ-level dashboard used by the business team to view customer information, including team details, company data, and billing information such as invoices.

Coherent billed customers on a percentage basis, calculated from the total value of invoices processed through the system each month. Here’s a diagram to explain the hierarchy in the system

For example, Member X pays £100/month for their membership at Workspace 1. Members Y and Z do the same, as do members at Workspaces 2 and 3. Coherent calculates the total value of these invoices under Operator 1 and charges them a percentage of that amount
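The arithmetic behind this billing model can be sketched in a few lines of TypeScript. This is an illustration only: the `Invoice` shape, the field names and the 2% fee rate are assumptions, since the actual rate and data model are not stated here.

```typescript
// Hypothetical invoice shape: field names are illustrative assumptions.
interface Invoice {
  operatorId: string;
  workspaceId: string;
  memberId: string;
  amountPence: number; // integer pence, to avoid floating-point drift on money
}

// Sum every invoice processed under one Operator, across all of its Workspaces.
function totalInvoicedPence(invoices: Invoice[], operatorId: string): number {
  return invoices
    .filter((inv) => inv.operatorId === operatorId)
    .reduce((sum, inv) => sum + inv.amountPence, 0);
}

// Apply a percentage fee to the monthly total, rounded to the nearest penny.
function monthlyFeePence(invoices: Invoice[], operatorId: string, feeRate: number): number {
  return Math.round(totalInvoicedPence(invoices, operatorId) * feeRate);
}

const sampleInvoices: Invoice[] = [
  { operatorId: "operator-1", workspaceId: "workspace-1", memberId: "x", amountPence: 10000 },
  { operatorId: "operator-1", workspaceId: "workspace-1", memberId: "y", amountPence: 10000 },
  { operatorId: "operator-1", workspaceId: "workspace-2", memberId: "z", amountPence: 10000 },
  { operatorId: "operator-2", workspaceId: "workspace-9", memberId: "q", amountPence: 5000 },
];

// Assumed 2% rate: £300 invoiced under Operator 1 gives a fee of 600 pence (£6).
const operatorOneFee = monthlyFeePence(sampleInvoices, "operator-1", 0.02);
```

Because the fee depends on whatever was invoiced that month, the same Operator can produce a different figure every month, which is why a per-period revenue metric was worth surfacing.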

This meant revenue from an operator could fluctuate month to month. While invoice data could be exported and reports generated, there were no clear in-app metrics to show revenue for a particular Operator over a specific period

This issue extended into the Workspace dashboard too - some staple metrics weren’t visible and could only be found by exporting data and manipulating it manually

Our business team wanted a quick, at-a-glance view of:

- How long someone had been a customer

- Their lifetime value

- Monthly or yearly revenue, and how it compared to previous periods

Speaking to workspaces, I found they had similar requests, particularly for their Leads CRM (invoice metrics already existed in the workspace dashboard). They wanted clearer visibility on how many leads had been added in a given period, how many had converted, and how this compared to similar periods

From this, I identified an opportunity to improve how we delivered metrics to users, and define a scalable, reusable approach that could support different dashboards, user roles, and future needs across the product

The problem

The biggest issue was that key data was buried in exports, meaning significant effort was required to answer even simple business questions. This slowed decision-making and made it harder for teams to act with confidence

For the HQ business team to do their best work, they needed a clear understanding of their customers. Missing or hard-to-access metrics meant their workflows were more manual and time-consuming than they needed to be

It was a similar story for Workspaces. They could open their CRM dashboard, see new leads, and get a rough sense of growth - but if they wanted to know with certainty how performance compared month to month, they had to manually analyse their exported data

We knew we had the data we needed to solve these problems. The challenge was making it available in a way that was useful and easy to interpret, without cluttering dashboards. Different users also had different needs - some cared about long-term trends, while others were more interested in short-term performance - which meant any solution needed to be flexible as well as clear

Goals

The goals for this project were:

- Enable users to answer questions quickly, without relying on exports or manual analysis

- Support customisation without introducing complexity or clutter

- Develop a reusable set of patterns and components for displaying metrics across the product

- Ensure metrics were clear, consistent and trustworthy

Exploration

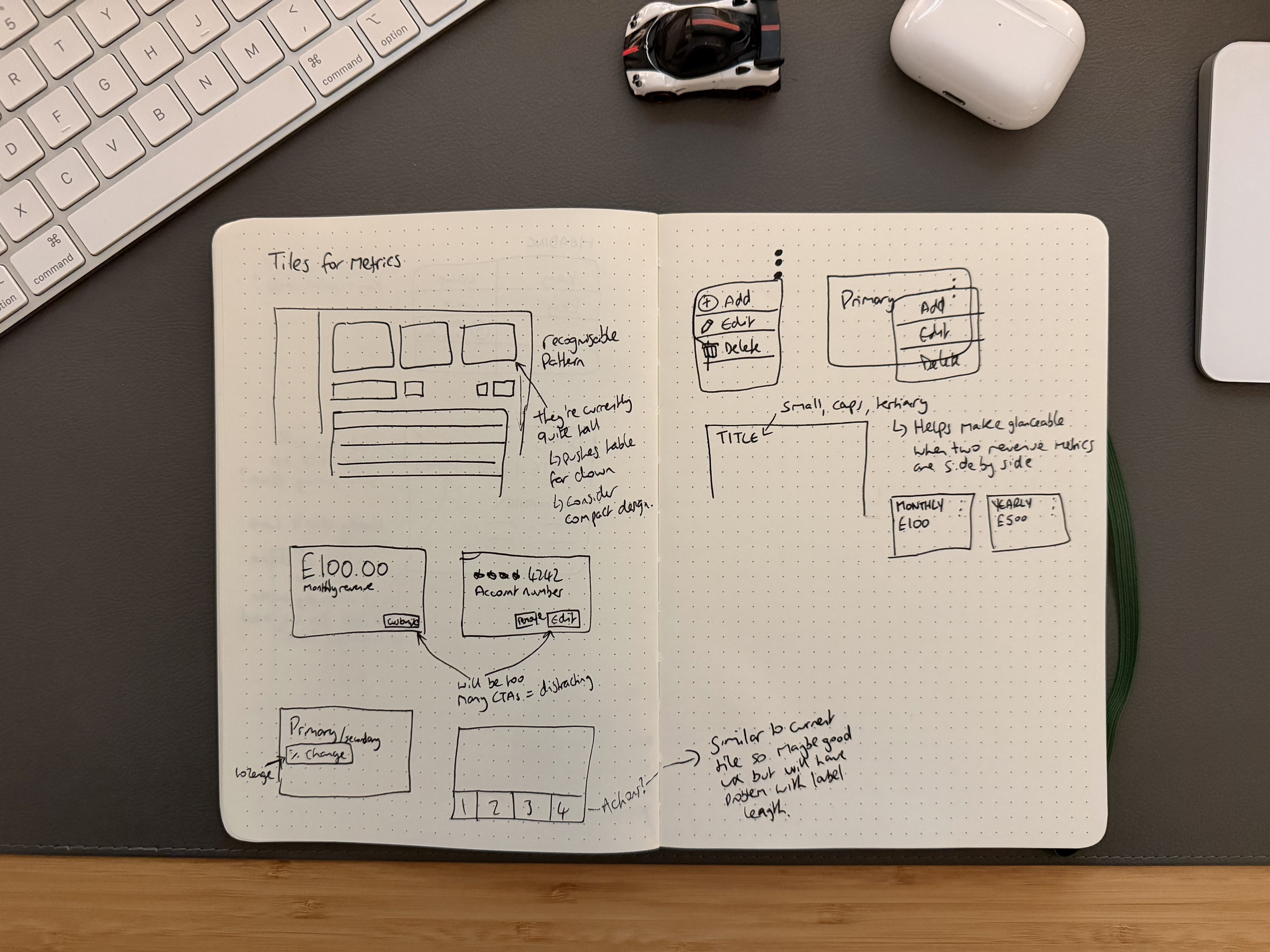

I approached this as a systems problem - not just designing individual metrics, but establishing patterns that could scale across the product

Components

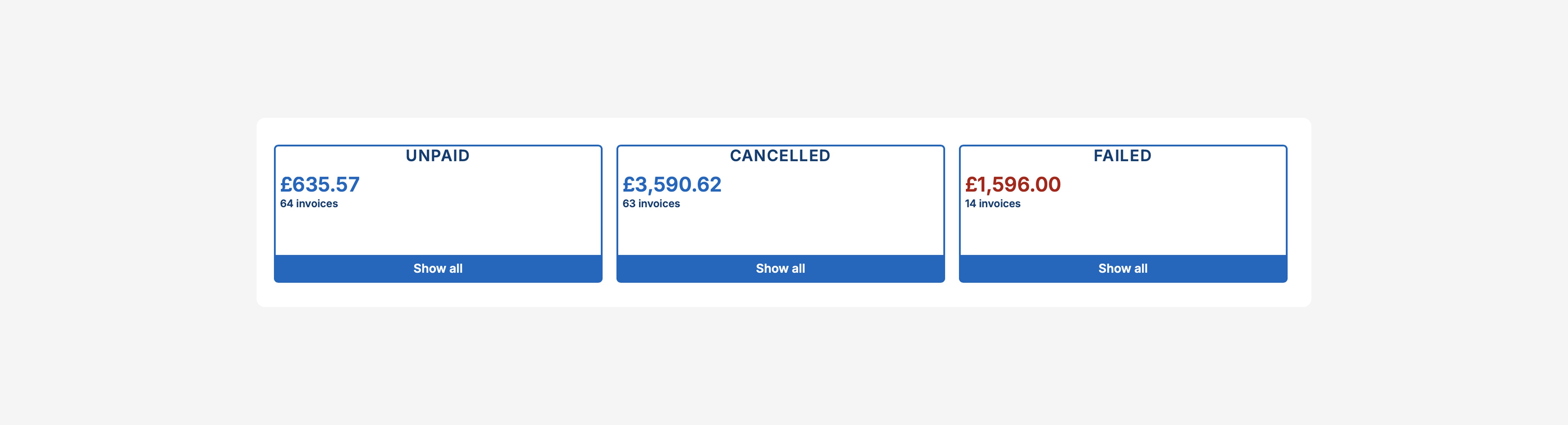

We already had a pattern for displaying at-a-glance metrics - showing three or four tiles at the top of the page - so I chose to reuse this pattern to maintain consistency between dashboards.

However, our existing tile components had become outdated and weren’t being used to their full potential. Data wasn’t presented as clearly as it could be, and there were accessibility issues with text colours and sizes.

I saw an opportunity to rework the component to make the presentation of data more impactful, while maintaining the familiar pattern so as not to disrupt the user experience. These tiles were one of the first components I had ever designed, so it was an exciting chance to revisit the work and reflect on how my approach and skills had evolved

A key goal was to ensure the solution could be easily reused across different pages without requiring manual overrides. To understand what flexibility was needed, I reviewed the data displayed in existing tiles and the additional functionality that they offered - the 'Invoices' tiles included a 'Show all' button that revealed invoices with a specific status, for example.

From this, I explored how we could create a more uniform and standardised structure, with clearly defined areas for primary metrics, secondary data and optional actions. This would allow tiles to adapt to different use cases while remaining consistent and predictable for users

I considered how to balance density and priority, ensuring key metrics stood out without overwhelming users

Customising metrics

Whilst discussing this work with customers, an interesting challenge emerged around how metrics should be configured, particularly in the Leads CRM

In one case, an Operator’s COO oversaw performance across multiple workspaces and had a set of key metrics they wanted teams to focus on - for example, leads added in the last 7 days or leads converted this month. They wanted to ensure workspace hosts had visibility of these metrics to maintain alignment across the organisation

At the same time, hosts often needed to see more situational metrics. For example, if they were running a marketing campaign, they might want to track leads generated during that period and compare that performance against a previous campaign period

This created a tension between standardisation and individual flexibility

My initial approach was to let the leadership team define a collection of default metrics in the Workspace Settings, which would be visible to all hosts. It was equally important, however, that hosts could adapt their dashboard to support their day-to-day work without affecting others

Working closely with our backend developer, I devised a way for default metrics to be set in the Workspace Settings, whilst still allowing hosts to customise tiles individually. Each host's preferences were stored in a session cookie, allowing them to tailor their view during their workflow without permanently overriding organisational defaults. When the cookie expired, the dashboard would revert to the Workspace defaults
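The fallback behaviour can be illustrated with a short TypeScript sketch. It is not Coherent's implementation: the metric ids, the JSON cookie format and the function names are all assumptions made for the example. The key idea is that personal overrides are applied per tile slot, and anything missing or unreadable falls back to the Workspace default.

```typescript
// Hypothetical types: metric ids and the cookie encoding are assumptions.
type MetricId = string;
type TileOverrides = Record<number, MetricId>; // tile slot (0-based) -> metric

// Serialise a host's personal overrides into a cookie-safe string.
function encodeOverrides(overrides: TileOverrides): string {
  return encodeURIComponent(JSON.stringify(overrides));
}

// Parse the cookie value; an absent or malformed cookie yields no overrides.
function decodeOverrides(cookieValue: string | undefined): TileOverrides {
  if (!cookieValue) return {};
  try {
    const parsed = JSON.parse(decodeURIComponent(cookieValue));
    return typeof parsed === "object" && parsed !== null ? parsed : {};
  } catch {
    return {};
  }
}

// Workspace defaults apply unless the host has overridden that slot.
function resolveTiles(defaults: MetricId[], cookieValue: string | undefined): MetricId[] {
  const overrides = decodeOverrides(cookieValue);
  return defaults.map((metric, slot) => overrides[slot] ?? metric);
}

const workspaceDefaults: MetricId[] = ["leads_added_last_7_days", "leads_converted_this_month"];

// A host swaps the second tile for a campaign metric during their session.
const sessionCookie = encodeOverrides({ 1: "leads_added_during_campaign" });
const hostView = resolveTiles(workspaceDefaults, sessionCookie);

// Once the cookie has expired there is nothing to read, so defaults return.
const afterExpiry = resolveTiles(workspaceDefaults, undefined);
```

Keeping the merge as a pure function means the organisational defaults are never mutated by an individual host's choices.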

This approach balanced alignment and flexibility, ensuring dashboards remained both consistent and personally useful

Comparison periods

Requests from our business team made it clear that showing revenue for a selected period wasn’t enough - they also needed to understand how that value compared to other periods. For example, they wanted to see how revenue for the current period compared to the same period last year, or how performance compared to a previous peak

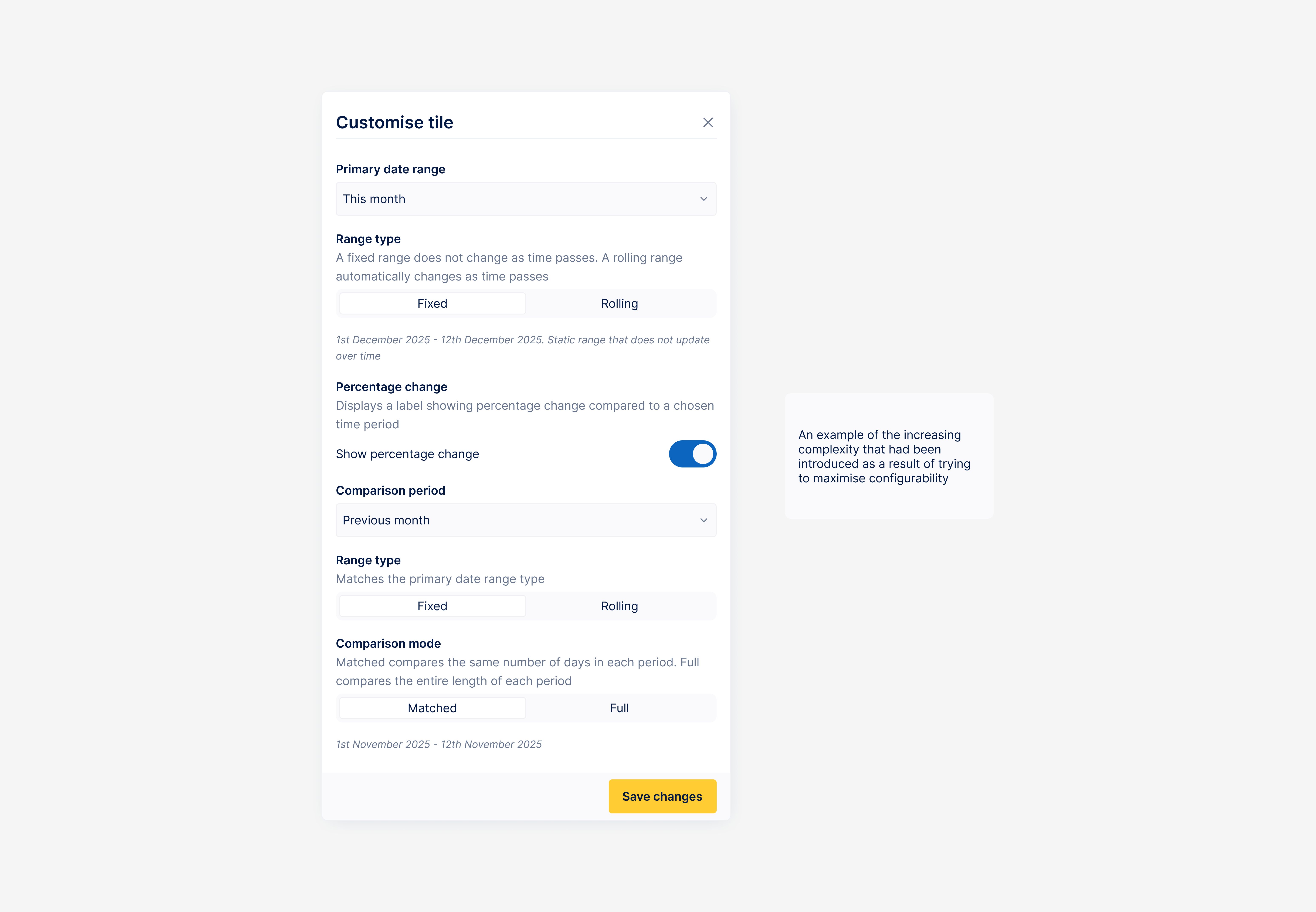

Because the solution needed to be reusable across different areas of the app, I focused on designing a comparison feature that was flexible enough to support a range of use cases. This proved to be more complex than expected

I started with a simple approach: if "Revenue last month" was selected as the primary metric, the available comparisons would be limited to relevant options such as "Revenue this month" or "Revenue previous month"

However, this immediately raised three key questions

- How should comparisons behave when the current period is still in progress?

- Should the user be able to compare periods of different lengths - for example, a month to a quarter?

- Should date ranges behave as rolling windows or fixed periods?

After reviewing solutions in other analytics tools, I introduced a Comparison mode to address the first question. Using a segmented control, users could choose between:

- Matched - the comparison period mirrors the active period (for example, 1st-10th February vs 1st-10th January)

- Full - the comparison uses complete periods (for example, 1st-10th February vs 1st-31st January)
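The difference between the two modes comes down to where the comparison range ends. The sketch below is a minimal TypeScript illustration for the month-to-date case only; the type and function names are hypothetical, and clamping to the last day of a shorter month is an assumption about how Matched would handle, say, 31st March against February.

```typescript
type ComparisonMode = "matched" | "full";

interface DateRange {
  start: Date; // inclusive
  end: Date; // inclusive
}

// Build dates in UTC so results don't shift with the viewer's timezone.
function utcDate(year: number, monthIndex: number, day: number): Date {
  return new Date(Date.UTC(year, monthIndex, day));
}

// Day 0 of the following month is the last day of this month.
function daysInMonth(year: number, monthIndex: number): number {
  return utcDate(year, monthIndex + 1, 0).getUTCDate();
}

// Given "today" inside an in-progress month, return the previous month's
// range to compare against.
function previousMonthComparison(today: Date, mode: ComparisonMode): DateRange {
  // A month index of -1 rolls back into December of the prior year.
  const start = utcDate(today.getUTCFullYear(), today.getUTCMonth() - 1, 1);
  const prevYear = start.getUTCFullYear();
  const prevMonth = start.getUTCMonth();
  const lastDay = daysInMonth(prevYear, prevMonth);
  // Matched mirrors the days elapsed so far; Full always runs to month end.
  const endDay = mode === "full" ? lastDay : Math.min(today.getUTCDate(), lastDay);
  return { start, end: utcDate(prevYear, prevMonth, endDay) };
}

// Viewing on 10th February 2025:
const tenthFeb = utcDate(2025, 1, 10);
const matchedRange = previousMonthComparison(tenthFeb, "matched"); // 1st-10th January
const fullRange = previousMonthComparison(tenthFeb, "full"); // 1st-31st January
```

Matched gives a like-for-like view while a period is still in progress, whereas Full shows the target the current period is working towards.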

Allowing comparisons between periods of different lengths was less of a technical challenge and more a question of user experience. While comparing “this month” to “last year” might not always produce meaningful insights, I chose to prioritise flexibility - enabling hosts to explore comparisons that were relevant to their context. For example, during a particularly strong month, they might want to compare performance against the previous quarter.

The final consideration was how date ranges should behave over time. If a user selected "Last month", should that value remain fixed based on when the preference was set, or update automatically as time moves forward? I explored introducing an additional control to let users choose, but became concerned about increasing cognitive load - the form for customising metrics was becoming crowded with options and conditional behaviour.

As configuration options expanded, it also became increasingly complex for our backend developer to calculate comparison values reliably, introducing potential risk to the accuracy and trustworthiness of the metrics.

Given that most users were not analytics specialists, I chose to make date ranges behave as rolling periods. This aligned with user expectations and kept the experience simple and predictable
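In practice, a rolling period means storing only the preset name and resolving it to concrete dates each time the dashboard is viewed. A minimal TypeScript sketch of that idea follows; the preset names and function are assumptions for illustration, not the shipped logic.

```typescript
// Hypothetical preset names; only the label is stored, never fixed dates.
type RollingPreset = "this_month" | "last_month" | "last_7_days";

interface ResolvedRange {
  start: Date; // inclusive, UTC
  end: Date; // inclusive, UTC
}

// Resolve a stored preset against the moment the dashboard is viewed.
function resolveRolling(preset: RollingPreset, now: Date): ResolvedRange {
  const y = now.getUTCFullYear();
  const m = now.getUTCMonth();
  const d = now.getUTCDate();
  const at = (yy: number, mm: number, dd: number): Date => new Date(Date.UTC(yy, mm, dd));
  switch (preset) {
    case "this_month":
      return { start: at(y, m, 1), end: at(y, m, d) };
    case "last_month":
      // Day 0 of the current month is the last day of the previous one.
      return { start: at(y, m - 1, 1), end: at(y, m, 0) };
    case "last_7_days":
      // Today plus the six days before it.
      return { start: at(y, m, d - 6), end: at(y, m, d) };
  }
}

// The same saved preference resolves differently as time moves forward.
const viewedInFebruary = resolveRolling("last_month", new Date(Date.UTC(2025, 1, 15)));
const viewedInMarch = resolveRolling("last_month", new Date(Date.UTC(2025, 2, 15)));
```

A fixed period would instead store the resolved dates at save time, which is the extra mode that was deliberately left out.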

Design principles

As the scope expanded, the challenge shifted from solving individual interface problems to defining principles that could guide how metrics should work across the product. These principles helped align design decisions and ensured the system could scale without becoming overly complex

- Clarity over configurability

- Trust through consistency

- Flexibility with guardrails

- Metrics should answer questions quickly

Key design decisions

With these principles in place, I focused on translating them into concrete design decisions across the system

Designing a standardised tile component

The final tile component was structured with a clear hierarchy: a large primary metric, an optional comparison label (for something like percentage change), and a smaller secondary metric or supporting context. Actions were now accessed via a kebab menu.

Establishing a predictable structure meant users could quickly interpret new metrics without relearning the interface.

Each tile included a title to provide context and help differentiate between similar, neighbouring tiles. To ensure titles remained accurate, some were dynamic, updating as the metric was customised (for example, changing from "Revenue this month" to "Revenue this year")
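The tile structure and dynamic title can be expressed as a small data model. The TypeScript below is a sketch under stated assumptions: the property names, period labels and rounding behaviour are hypothetical and do not describe the actual component API.

```typescript
// Hypothetical tile model: names are illustrative, not the real component API.
interface TileAction {
  label: string;
  run: () => void;
}

interface MetricTile {
  title: string; // context, e.g. "Revenue this month"
  primaryValue: string; // the large headline figure
  comparisonLabel?: string; // optional, e.g. "+25%"
  secondaryText?: string; // smaller supporting context
  actions?: TileAction[]; // shown in the kebab menu when present
}

type TilePeriod = "this_month" | "last_month" | "this_year";

const PERIOD_LABELS: Record<TilePeriod, string> = {
  this_month: "this month",
  last_month: "last month",
  this_year: "this year",
};

// Derive the title from the configuration so it can never drift out of date.
function tileTitle(metricName: string, period: TilePeriod): string {
  return `${metricName} ${PERIOD_LABELS[period]}`;
}

// Percentage change for the comparison label; undefined when there is no
// baseline to compare against.
function percentChangeLabel(current: number, previous: number): string | undefined {
  if (previous === 0) return undefined;
  const rounded = Math.round(((current - previous) / previous) * 100);
  return `${rounded > 0 ? "+" : ""}${rounded}%`;
}

const revenueTile: MetricTile = {
  title: tileTitle("Revenue", "this_month"),
  primaryValue: "£1,250",
  comparisonLabel: percentChangeLabel(1250, 1000),
  secondaryText: "vs £1,000 last month",
};
```

Making the comparison label, secondary text and actions optional is what lets one component cover tiles with very different amounts of supporting data.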

I chose to remove the "Show all" buttons that previously appeared at the bottom of some tiles. These buttons primarily created filters - functionality that didn’t exist when the tiles were originally released. Now that we had a dedicated filtering feature, removing them freed up space and allowed data to be presented more clearly

For tiles that still supported actions, I moved these into a kebab menu in the top right corner. While this introduced an extra click for users to get to the actions, it ensured actions were consistently located across all tiles, making them easier to learn and predict

This approach also gave us room to grow. New actions could be easily added into the menu, without affecting the layout or visual balance of the tile itself

Prioritising clarity over configurability

Balancing flexible configuration with a clear, approachable experience was a challenge. As additional options were considered - such as fixed versus rolling date ranges - the configuration model became increasingly complex for both users and the development team.

I chose to prioritise clarity by limiting the number of controls and adopting sensible defaults, such as rolling date ranges and a simplified comparison model. This reduced cognitive load and ensured users could quickly understand what they were seeing without needing to interpret underlying date logic.

This decision also helped maintain confidence in the metrics by reducing implementation complexity and the risk of calculation errors. While this meant not supporting every possible configuration, it ensured the dashboard remained intuitive, trustworthy, and focused on answering common questions effectively.

Balancing organisational defaults with personal flexibility

Exploration revealed a tension between maintaining a consistent view of performance, defined by leadership, and allowing hosts to tailor metrics to their workflows. To address this, I decided to support organisational defaults defined in Workspace Settings, alongside personal customisation at the tile level.

This ensured dashboards communicated shared priorities while still enabling users to explore data relevant to their context. Storing personal preferences locally allowed flexibility without introducing additional configuration complexity

Outcome

Although this feature never made it to production due to team redundancy, the work established a clear direction for how metrics could be handled across Coherent.

More importantly, it defined a scalable foundation - shared patterns, comparison logic and configuration principles - that could support future dashboards without reinventing solutions each time

Reflection

This project proved more complex than initially anticipated. Designing a system that was flexible enough to support a variety of use cases while remaining simple and understandable required careful trade-offs.

A key learning was recognising when additional configurability begins to introduce unnecessary complexity. In hindsight, I spent too long exploring solutions that could accommodate every scenario, which risked increasing cognitive load for users.

The experience reinforced the importance of balancing flexibility with clarity, and of making deliberate decisions to simplify when complexity does not meaningfully improve outcomes.

Even without release, this project strengthened my approach to designing systems rather than isolated features - thinking about how decisions scale, how complexity accumulates, and how clarity can be preserved as products grow. It also showed the value of designing beyond immediate needs, for systems that can evolve alongside products and teams.